In the previous post, we established the foundation of our data sovereignty: an isolated, highly secure PostgreSQL database running on a dedicated Oracle Cloud ARM instance. We escaped the black-box limitations of third-party platforms like Myfxbook, securing a private vault capable of storing millions of rows of live execution data and raw JSON API responses.

However, a database sitting behind a strict firewall is entirely passive. It cannot reach out to the markets and grab your trading data. The data must be pushed to it.

This presents a critical architectural problem. Your execution servers—the Hyonix Windows VPS running your MetaTrader 5 Expert Advisors, and the Vultr Ubuntu node running your asynchronous Python bots—need to transmit their execution logs to the database. The amateur approach is to simply open the PostgreSQL port (5432) to the public internet and hardcode your database credentials directly into your MT5 EA or Python script.

Doing this is equivalent to leaving the vault door wide open. The moment a database port is exposed to the internet, automated botnets will aggressively scan and brute-force it. Furthermore, embedding your master database password inside an MT5 EA compiled file is a severe security risk. If that EA is ever decompiled or compromised, the attacker gains complete read/write access to your entire financial history.

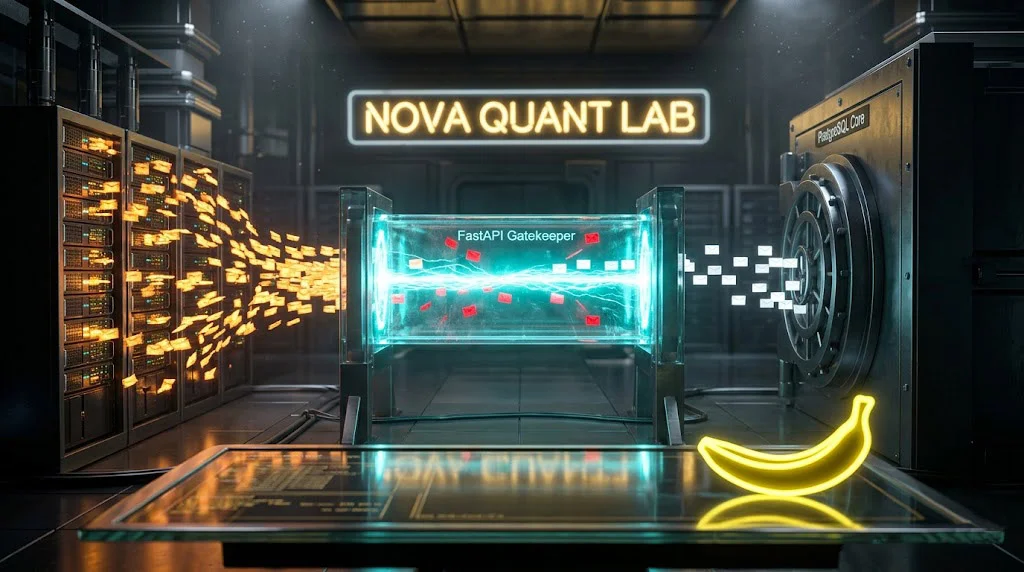

We need a secure middleman. We need a highly performant, lightweight gatekeeper that sits in front of the database, listens for incoming trade data, verifies the authenticity of the sender, formats the data correctly, and safely writes it to the ledger. In the modern quantitative tech stack, the undisputed tool for this job is FastAPI.

In this post, I am going to walk you through the exact architecture and code required to build a zero-trust Python API backend. We will deploy it on our Oracle server, write the strict validation models, and most importantly, construct the exact webhook triggers required to blast data from both Python CCXT bots and MQL5 environments without introducing execution lag.

Section 1: The Zero-Trust Gatekeeper Architecture

Our architecture is strictly defined by the “Separation of Concerns.” The execution servers only execute. The database server only stores. The API sits exactly between them.

- The Trigger: An order is filled on Binance via your Python bot, or a Take Profit is hit on Forex.com via your MT5 EA.

- The Payload: The execution script immediately packages the trade details (entry price, exit price, PnL, timestamp) into a standard JSON payload.

- The Webhook: The execution script fires a single, asynchronous HTTP POST request across the internet to our Oracle ARM server’s IP address. Embedded in the hidden headers of this request is a secret API key.

- The Gatekeeper (FastAPI): The FastAPI application intercepts the request. It first checks the header. If the API key is missing or incorrect, it instantly drops the packet with a 401 Unauthorized error. If authenticated, it passes the JSON payload to Pydantic.

- The Validator (Pydantic): Pydantic inspects the JSON payload. If the execution script accidentally sent a string (“Bitcoin”) instead of a float (65000.50) for the price, Pydantic rejects it. This ensures malformed data never poisons our database.

- The Write: Once validated, SQLAlchemy translates the Python object into a raw SQL query and safely inserts it into our PostgreSQL

live_tradestable.

Section 2: Why FastAPI is the Professional Standard

If you have built web applications before, you might be wondering why we are not using Django or Flask. Django is a massive, monolithic framework designed for building complete web platforms with user authentication, admin panels, and template rendering. It is incredibly bloated for what we need. Flask is lightweight, but it is fundamentally synchronous and lacks native data validation.

FastAPI was built specifically for modern, high-performance API architectures.

- Asynchronous by Design: Like our CCXT execution bots, FastAPI natively supports

asyncandawait. It can handle thousands of incoming trade webhooks simultaneously without blocking the main thread. - Pydantic Integration: It uses Pydantic for strict data validation out of the box. You define the exact shape of your data using Python type hints, and FastAPI handles the parsing and error generation automatically.

- Raw Speed: Built on Starlette and Uvicorn, its performance rivals NodeJS and Go, making it one of the fastest Python frameworks in existence. When your algorithmic fleet is executing high-frequency arbitrage, your API must process the webhook and return a 200 OK status in milliseconds.

Section 3: Setting Up the Backend Environment

Log into your Oracle Cloud ARM instance (managed via aaPanel, as discussed previously). We will utilize the aaPanel Python Manager to create an isolated virtual environment.

We need four core libraries for our backend engine:

fastapi: The core web framework.uvicorn: The lightning-fast ASGI server that runs FastAPI.sqlalchemy: The Object Relational Mapper (ORM) to safely interact with PostgreSQL.psycopg2-binary: The underlying PostgreSQL database adapter for Python.

Once your virtual environment is active, install the dependencies:

Bash

pip install fastapi uvicorn sqlalchemy psycopg2-binary pydantic

Section 4: Writing the Strict Pydantic Models

The most critical defensive layer of our API is the data model. If an MT5 EA bugs out and attempts to log a trade with a negative volume, or if a crypto bot sends a corrupted timestamp, the database will throw a fatal error. We must catch bad data before it touches PostgreSQL.

Create a file named schemas.py. This file dictates the absolute law of what our JSON payload must look like.

Python

from pydantic import BaseModel, Field

from typing import Optional, Any

from datetime import datetime

class TradePayload(BaseModel):

bot_name: str = Field(..., max_length=50)

exchange: str = Field(..., max_length=50)

asset_symbol: str = Field(..., max_length=20)

order_type: str = Field(..., max_length=10)

entry_time: datetime

exit_time: Optional[datetime] = None

entry_price: float = Field(..., gt=0)

exit_price: Optional[float] = None

position_size: float = Field(..., gt=0)

realized_pnl: Optional[float] = None

commission: Optional[float] = None

magic_number: Optional[int] = None

exchange_order_id: Optional[str] = None

raw_api_response: Optional[Any] = None

status: str = Field(default="OPEN")

Notice the strict parameters. entry_price and position_size must be strictly greater than zero (gt=0). Timestamps must be valid datetime objects. If the incoming webhook violates any of these rules, FastAPI automatically rejects it.

Section 5: Architecting the API Gatekeeper

Now we construct the actual server logic. Create a file named main.py. This script handles the server initialization, the security verification, and the database injection.

For security, we will hardcode a master API Key. In a massive enterprise environment, you would use AWS Secrets Manager or environment variables, but for a private, dedicated tracking server, a highly complex, 64-character randomized string stored in the script or a .env file is highly effective.

Python

from fastapi import FastAPI, Depends, HTTPException, status

from fastapi.security import APIKeyHeader

from sqlalchemy import create_engine, Column, Integer, String, Numeric, DateTime, BigInteger

from sqlalchemy.dialects.postgresql import JSONB

from sqlalchemy.orm import sessionmaker, declarative_base

from schemas import TradePayload

# --- 1. Security Configuration ---

API_KEY_NAME = "X-Quant-API-Key"

API_KEY_VALUE = "YOUR_64_CHARACTER_RANDOM_SECURE_STRING"

api_key_header = APIKeyHeader(name=API_KEY_NAME, auto_error=True)

def verify_api_key(api_key: str = Depends(api_key_header)):

if api_key != API_KEY_VALUE:

raise HTTPException(

status_code=status.HTTP_401_UNAUTHORIZED,

detail="Invalid API Key. Access Denied."

)

return api_key

# --- 2. Database Configuration ---

DB_URL = "postgresql://your_db_user:your_secure_password@localhost:5432/quant_live_ledger"

engine = create_engine(DB_URL)

SessionLocal = sessionmaker(autocommit=False, autoflush=False, bind=engine)

Base = declarative_base()

# Define the SQLAlchemy Model (Must match the DB schema from the previous post)

class TradeRecord(Base):

__tablename__ = "live_trades"

internal_id = Column(Integer, primary_key=True, index=True)

bot_name = Column(String(50), nullable=False, index=True)

exchange = Column(String(50), nullable=False)

asset_symbol = Column(String(20), nullable=False)

order_type = Column(String(10), nullable=False)

entry_time = Column(DateTime(timezone=True), nullable=False)

exit_time = Column(DateTime(timezone=True), index=True)

entry_price = Column(Numeric(18, 8), nullable=False)

exit_price = Column(Numeric(18, 8))

position_size = Column(Numeric(18, 8), nullable=False)

realized_pnl = Column(Numeric(18, 2))

commission = Column(Numeric(18, 4))

magic_number = Column(BigInteger)

exchange_order_id = Column(String(100))

raw_api_response = Column(JSONB)

status = Column(String(20), default="OPEN", index=True)

# --- 3. FastAPI Application Initialization ---

app = FastAPI(title="Nova Quant Data Ingestion Engine")

# Dependency to get DB session

def get_db():

db = SessionLocal()

try:

yield db

finally:

db.close()

# --- 4. The Ingestion Endpoint ---

@app.post("/api/v1/log_trade", status_code=201)

async def log_trade(payload: TradePayload, db: SessionLocal = Depends(get_db), api_key: str = Depends(verify_api_key)):

# Translate Pydantic model to SQLAlchemy ORM object

db_trade = TradeRecord(

bot_name=payload.bot_name,

exchange=payload.exchange,

asset_symbol=payload.asset_symbol,

order_type=payload.order_type,

entry_time=payload.entry_time,

exit_time=payload.exit_time,

entry_price=payload.entry_price,

exit_price=payload.exit_price,

position_size=payload.position_size,

realized_pnl=payload.realized_pnl,

commission=payload.commission,

magic_number=payload.magic_number,

exchange_order_id=payload.exchange_order_id,

raw_api_response=payload.raw_api_response,

status=payload.status

)

try:

db.add(db_trade)

db.commit()

db.refresh(db_trade)

return {"status": "success", "internal_id": db_trade.internal_id}

except Exception as e:

db.rollback()

raise HTTPException(status_code=500, detail=f"Database Write Failure: {str(e)}")

This script is the ultimate gatekeeper. It enforces the API key requirement on the /api/v1/log_trade endpoint. It parses the incoming JSON, ensures the data types are perfect, translates it into a SQL statement, and commits it. If anything fails, it rolls back the transaction to prevent database corruption.

To deploy this in production on your Oracle server, you use Uvicorn running behind a Gunicorn process manager, which ensures the API stays alive 24/7 and restarts automatically if the server reboots.

Bash

gunicorn main:app -w 4 -k uvicorn.workers.UvicornWorker --bind 0.0.0.0:8000

Section 6: Firing the Webhook from Python (CCXT)

With the backend active, we must configure our execution algorithms to transmit data.

For your Python crypto bots running on Vultr, firing the webhook is straightforward. However, the execution methodology is critical. You must use the aiohttp library to fire the request asynchronously. If you use the standard synchronous requests library, your entire trading loop will freeze and wait for the Oracle server to respond. In high-frequency arbitrage, a 50-millisecond freeze can cost you a profitable fill.

Here is the exact asynchronous logic to inject into your execution scripts after an order is filled:

Python

import aiohttp

import asyncio

from datetime import datetime, timezone

async def log_trade_to_backend(trade_data_dict):

url = "http://YOUR_ORACLE_IP:8000/api/v1/log_trade"

headers = {

"Content-Type": "application/json",

"X-Quant-API-Key": "YOUR_64_CHARACTER_RANDOM_SECURE_STRING"

}

async with aiohttp.ClientSession() as session:

try:

# We use fire-and-forget; we don't wait to read the response body to save latency.

async with session.post(url, json=trade_data_dict, headers=headers) as response:

if response.status != 201:

print(f"Failed to log trade. Status: {response.status}")

except Exception as e:

print(f"Webhook transmission failed: {e}")

# Example Usage inside your main CCXT execution loop:

# payload = {"bot_name": "StatArb_v3", "exchange": "Binance", ...}

# asyncio.create_task(log_trade_to_backend(payload))

By wrapping the webhook in asyncio.create_task(), the script fires the packet across the internet and immediately returns to scanning the order book, completely unaffected by network latency.

Section 7: The Brutal Reality of MQL5 WebRequests

Connecting a modern Python API to an MT5 Expert Advisor is notoriously painful. MQL5 is a strict, statically typed language akin to C++, and constructing raw HTTP POST requests with custom headers and JSON payloads requires meticulous formatting.

Unlike Python, MQL5 does not have a native, easy-to-use JSON builder. You must format the string manually. Furthermore, before your EA can send any data, you must manually whitelist your Oracle API IP address inside the MT5 terminal settings (Tools > Options > Expert Advisors > Allow WebRequest for listed URL). If you skip this step, MT5 will silently block the transmission for security reasons.

Here is the raw MQL5 function required to format your EA’s trade data and blast it to your FastAPI server. This should be triggered inside your OnTradeTransaction or OnTick logic whenever a position is closed.

C++

void SendTradeWebhook(string symbol, double entryPrice, double exitPrice, double volume, double pnl, long magicNumber) {

string url = "http://YOUR_ORACLE_IP:8000/api/v1/log_trade";

string cookie = NULL;

string headers = "Content-Type: application/json\r\n"

"X-Quant-API-Key: YOUR_64_CHARACTER_RANDOM_SECURE_STRING\r\n";

int timeout = 5000;

char post[], result[];

string resultHeaders;

// Constructing the JSON Payload manually in MQL5

string json = StringFormat(

"{\"bot_name\":\"MT5_Trend_Bot\",\"exchange\":\"Forex.com\",\"asset_symbol\":\"%s\",\"order_type\":\"SELL\",\"entry_time\":\"%s\",\"exit_time\":\"%s\",\"entry_price\":%f,\"exit_price\":%f,\"position_size\":%f,\"realized_pnl\":%f,\"magic_number\":%I64d,\"status\":\"CLOSED\"}",

symbol,

TimeToString(TimeCurrent(), TIME_DATE|TIME_SECONDS),

TimeToString(TimeCurrent(), TIME_DATE|TIME_SECONDS),

entryPrice,

exitPrice,

volume,

pnl,

magicNumber

);

StringToCharArray(json, post, 0, WHOLE_ARRAY, CP_UTF8);

// The infamous WebRequest execution

int res = WebRequest("POST", url, headers, timeout, post, result, resultHeaders);

if(res == -1) {

Print("Webhook Error in WebRequest. Error code: ", GetLastError());

Print("Did you add the URL to the allowed list in MT5 Options?");

} else if (res == 201) {

Print("Trade successfully logged to Oracle Database.");

} else {

Print("API Server rejected the payload. HTTP Status: ", res);

}

}

Note: MQL5 natively outputs dates with dots (e.g., 2026.04.05). To ensure strict compatibility with FastAPI’s Pydantic datetime validation, you may need to run a quick StringReplace(timeString, ".", "-"); to convert it into a standard ISO format before injecting it into the JSON payload.

This code forces MT5 to communicate with modern web infrastructure. It bypasses Myfxbook completely, sending your highly sensitive execution data straight into your private, secure vault.

The Final Blueprint

We have effectively built an institutional-grade data pipeline. Your distributed execution servers—whether they are hunting latency in Tokyo or running heavy Windows EAs in New York—are now securely streaming their results to a centralized, high-performance API gatekeeper. That gatekeeper validates the data and locks it into an unbreakable PostgreSQL ledger.

You now own your data. You are no longer reliant on third-party verification platforms, and your trading metrics are permanently secured on infrastructure that costs you absolutely zero dollars per month.

But raw database tables are unreadable to the human eye. In the final installment of this Custom Quant Dashboard series, we will extract this data and build the frontend. We will construct a visually striking, high-performance web dashboard hosted on the global edge using a Headless architecture, allowing you to display your true equity curves, Sharpe ratios, and algorithmic alpha to the world in real-time.