Welcome back to Nova Quant Lab.

In our journey through Season 3, we have successfully elevated our quantitative infrastructure from deterministic classical statistics to the probabilistic realm of Machine Learning. In Posts 10, 11, and 12, we engineered real-time features, trained a LightGBM classification engine, and forged it in the crucible of Purged K-Fold Cross-Validation. You now possess a highly resilient, lightning-fast AI that can recognize multi-dimensional order book imbalances.

However, as sophisticated as our Gradient Boosted Decision Tree (GBDT) model is, it suffers from a fundamental philosophical blind spot: It has no innate concept of time.

A tree-based model looks at the market as a series of isolated, static photographs. Even when we engineer rolling features (like a 50-tick moving average), we are simply summarizing the past into a single number for the present. The model does not “feel” the sequence. It does not watch the movie; it only looks at the final frame.

But financial markets are not static. The market is a continuous, sequential river of human and algorithmic intentions. To capture the highest tier of alpha, we must deploy an architecture that can remember the past, understand the sequence of events, and read the “narrative” of the order book.

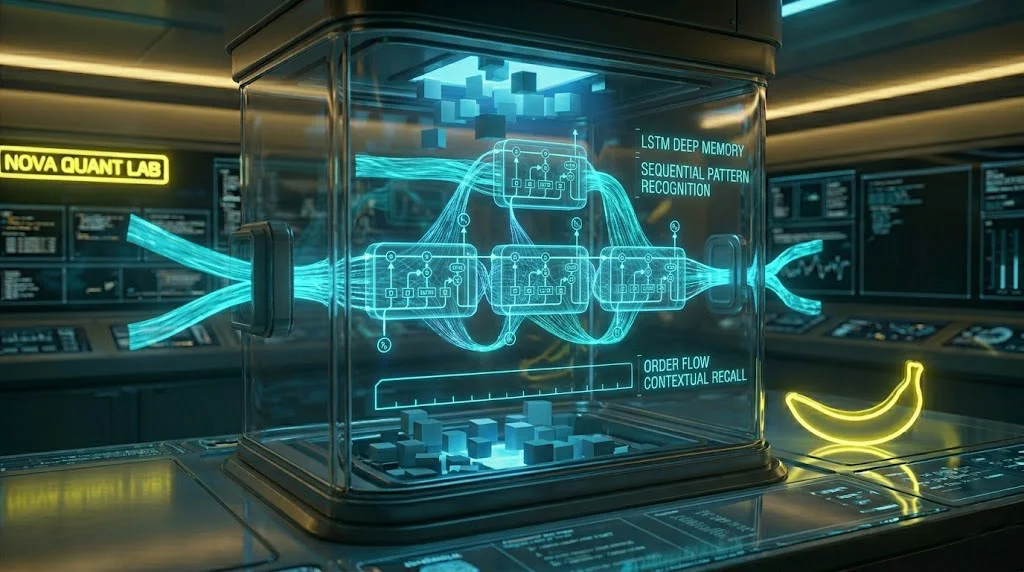

Today, we step into the deep waters of Neural Networks. We will explore the architecture of the Long Short-Term Memory (LSTM) network, understand how to reshape our data into three-dimensional tensors, and teach our machine to watch the movie of the market.

1. The Narrative of Liquidity: Why Sequence Matters

To understand why Deep Learning is necessary, consider a classic institutional manipulation tactic: Spoofing.

Imagine a large algorithmic trader wants to buy Bitcoin cheaply.

- At T=1, they place a massive, fake Limit Sell order (a spoof) 10 ticks above the current price.

- At T=2, retail trading bots see this massive sell wall, panic, and begin selling aggressively, driving the price down.

- At T=3, the institutional trader cancels the fake sell wall and instantly executes a massive Taker Buy order at the newly depressed price.

If a LightGBM model looks at the data at T=3, it only sees a massive Taker Buy order and a slightly depressed price. It misses the manipulation. It misses the setup.

An LSTM network, however, ingests the data sequentially. It processes T=1, remembers the massive sell wall, processes T=2, observes the retail panic, and at T=3, it recognizes the cancellation and the aggressive buy. The LSTM understands the narrative. It recognizes the “Spoof and Pump” sequence and, given enough labeled examples, can learn to anticipate the sharp upward mean-reversion that tends to follow.
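To make the snapshot-versus-sequence distinction concrete, here is a toy sketch. The three feature columns (ask-wall size, price change in ticks, taker-buy volume) and every number in it are invented for illustration; none of it comes from a real order book. Two three-tick histories end in the same final frame, so a model that only sees the last row cannot tell them apart, while the full sequences clearly differ.

```python
import numpy as np

# Hypothetical per-tick features: [ask_wall_size, price_change_ticks, taker_buy_volume]
# All values are made up purely for illustration.
spoof_sequence = np.array([
    [500.0,  0.0,   0.0],   # T=1: a huge sell wall appears
    [500.0, -8.0,   0.0],   # T=2: price is pushed down while the wall stands
    [  0.0, -8.0, 300.0],   # T=3: the wall is gone and a large taker buy hits
])

organic_sequence = np.array([
    [  0.0, -3.0,   0.0],   # T=1: ordinary drift lower, no wall
    [  0.0, -6.0,   0.0],   # T=2: the drift continues
    [  0.0, -8.0, 300.0],   # T=3: a large taker buy hits
])

# What a snapshot model sees: only the final frame.
snapshot_a = spoof_sequence[-1]
snapshot_b = organic_sequence[-1]
print("Final frames identical:  ", np.array_equal(snapshot_a, snapshot_b))

# What a sequence model sees: the whole history.
print("Full sequences identical:", np.array_equal(spoof_sequence, organic_sequence))
```

Rolling features can recover part of this history, but only the parts you thought to summarize in advance.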

2. The Anatomy of Memory: How LSTMs Work

Standard Recurrent Neural Networks (RNNs) attempt to remember the past by feeding the hidden state from the previous step into the current step alongside the new input. However, standard RNNs suffer from the “Vanishing Gradient Problem”: during training, the error signal shrinks each time it is propagated back one step, so the influence of early ticks fades to almost nothing. In practice, they have the memory span of a goldfish. If a sequence is 50 ticks long, by the time the network reaches tick 50, it has largely forgotten what happened at tick 1.
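A quick numerical sketch shows how fast that signal fades. It assumes a deliberately tiny, one-unit RNN, h_t = tanh(w · h_{t-1} + x_t), with a hypothetical recurrent weight of 0.9 and small random inputs. By the chain rule, the sensitivity of the final state to the first state is a product of one factor per step, and each factor here is smaller than 0.9.

```python
import numpy as np

def gradient_through_time(w, inputs):
    """Return d h_T / d h_1 for the scalar RNN h_t = tanh(w * h_{t-1} + x_t)."""
    h = 0.0
    grad = 1.0
    for t, x_t in enumerate(inputs):
        h = np.tanh(w * h + x_t)
        if t > 0:
            # Chain rule: d h_t / d h_{t-1} = w * (1 - h_t^2)
            grad *= w * (1.0 - h ** 2)
    return grad

rng = np.random.default_rng(1)
inputs = rng.normal(0.0, 0.1, size=50)   # toy inputs, not market data
w = 0.9                                  # hypothetical recurrent weight

grad_5 = gradient_through_time(w, inputs[:5])
grad_50 = gradient_through_time(w, inputs)

print(f"sensitivity of h_5  to h_1: {grad_5:.4f}")
print(f"sensitivity of h_50 to h_1: {grad_50:.6f}")
```

With 49 factors that are each below 0.9, the 50-tick sensitivity cannot exceed 0.9^49, which is roughly 0.006. The training signal that should connect tick 50 back to tick 1 has almost disappeared.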

The Long Short-Term Memory (LSTM) architecture, introduced by Hochreiter and Schmidhuber in 1997, solved this by introducing a complex internal structure called a “Cell State” and a series of “Gates.”

Think of the Cell State as a conveyor belt running straight down the entire sequence. It carries the long-term memory. The Gates are neural networks that decide what information should be added to the conveyor belt, and what information is no longer relevant and should be thrown away.

The Mathematics of the Gates

To truly be a quantitative engineer, you must understand the math under the hood. The LSTM cell applies three gates at every single timestep (t), then uses them to update its memory:

1. The Forget Gate:

This gate looks at the previous hidden state (h_{t-1}) and the current input (x_t), and outputs a number between 0 and 1 for each number in the previous cell state (C_{t-1}). A 1 means “keep this completely,” while a 0 means “forget this entirely.”

f_t = σ(W_f · [h_{t-1}, x_t] + b_f)

2. The Input Gate:

This gate decides what new information we are going to store in the cell state. A sigmoid layer (i_t) chooses which entries to update, and a separate tanh layer creates a vector of new candidate values (C̃_t) that could be added to the state.

i_t = σ(W_i · [h_{t-1}, x_t] + b_i)

C̃_t = tanh(W_C · [h_{t-1}, x_t] + b_C)

The cell state is then updated by scaling the old memory with the forget gate and adding the gated candidates (⊙ denotes element-wise multiplication):

C_t = f_t ⊙ C_{t-1} + i_t ⊙ C̃_t

3. The Output Gate:

Finally, the network decides what it is going to output as the new hidden state (h_t), based on a filtered version of the newly updated cell state.

o_t = σ(W_o · [h_{t-1}, x_t] + b_o)

h_t = o_t ⊙ tanh(C_t)

By orchestrating these gates, the LSTM can learn to hold onto a critical piece of information (like a massive spoof order) for 100 timesteps, ignoring all the irrelevant noise in between, until it sees the trigger event that completes the pattern.
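The gate logic is easier to trust once you have run it by hand. Below is a minimal NumPy sketch of one LSTM timestep using the standard update rules: forget, input, and output gates, plus the tanh candidate values and the cell-state update. The dictionary layout for `W` and `b` is our own convention, and the parameters are set by hand rather than trained: a large positive forget bias and a large negative input bias, which is the regime a trained network would need to reach in order to hold a memory.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def lstm_step(x_t, h_prev, c_prev, W, b):
    """One LSTM timestep. W[k] has shape (hidden, hidden + features), b[k] has shape (hidden,)."""
    z = np.concatenate([h_prev, x_t])          # [h_{t-1}, x_t]
    f_t = sigmoid(W["f"] @ z + b["f"])         # forget gate
    i_t = sigmoid(W["i"] @ z + b["i"])         # input gate
    c_tilde = np.tanh(W["c"] @ z + b["c"])     # candidate values
    o_t = sigmoid(W["o"] @ z + b["o"])         # output gate
    c_t = f_t * c_prev + i_t * c_tilde         # new cell state
    h_t = o_t * np.tanh(c_t)                   # new hidden state
    return h_t, c_t

hidden, n_features = 4, 3
rng = np.random.default_rng(0)

# Hand-set (untrained) parameters: small random weights, biases that
# hold the forget gate near 1 and the input gate near 0.
W = {k: rng.normal(0.0, 0.05, size=(hidden, hidden + n_features)) for k in "fico"}
b = {
    "f": np.full(hidden, 10.0),
    "i": np.full(hidden, -10.0),
    "c": np.zeros(hidden),
    "o": np.zeros(hidden),
}

h = np.zeros(hidden)
c = np.ones(hidden)            # pretend a "spoof seen" flag was written earlier
c_start = c.copy()

for _ in range(100):           # 100 ticks of pure noise
    x_t = rng.normal(size=n_features)
    h, c = lstm_step(x_t, h, c, W, b)

print("cell state after 100 noisy ticks:", np.round(c, 3))
```

With the gates pinned this way, the cell state stays close to its starting value of 1.0 across all 100 ticks. Training is what teaches the network when to open and close these gates on its own.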

3. The 3D Tensor: Reshaping Reality for Deep Learning

This is the exact point where many data scientists transitioning into quantitative finance hit a brick wall.

When we trained our LightGBM model in Post 11, our data was a 2D matrix (a standard spreadsheet). It had Rows (Samples) and Columns (Features like OBI, Micro-Price).

LSTMs cannot process 2D data. Because they require a sequence, we must transform our data into a 3D Tensor. The dimensions of this tensor are (Samples, Timesteps, Features).

- Samples: The total number of training examples (e.g., 100,000 rows).

- Timesteps: The “Look-back Window.” How many previous ticks should the LSTM look at to make one prediction? Let us choose 50 timesteps.

- Features: The number of engineered data points per tick (e.g., 10 features).

If our original 2D dataset has 100,000 rows and 10 features, reshaping it for an LSTM with a 50-timestep look-back window means we are creating just under 100,000 “mini-movies,” because the earliest rows do not yet have a full look-back window behind them. Each mini-movie contains the current tick and the 49 ticks that preceded it.
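Before building the tensor, it is worth doing the arithmetic on its size, because every tick gets copied into up to 50 overlapping windows. The sketch below assumes float32 storage and one window ending at every row that has 50 ticks of history; both are our assumptions, not requirements.

```python
n_rows, time_steps, n_features = 100_000, 50, 10
bytes_per_value = 4  # float32 (assumption)

# Rows that have a full look-back window ending on them
n_windows = n_rows - time_steps + 1

bytes_2d = n_rows * n_features * bytes_per_value
bytes_3d = n_windows * time_steps * n_features * bytes_per_value

print(f"windows:   {n_windows:,}")
print(f"2D matrix: {bytes_2d / 1e6:.1f} MB")
print(f"3D tensor: {bytes_3d / 1e6:.1f} MB")
```

Under these assumptions a 4 MB table becomes a tensor of roughly 200 MB, about 50 times larger. On longer histories or wider feature sets, this is usually the first place memory runs out.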

Here is the Python implementation using numpy to forge this 3D Tensor:

Python

import numpy as np

def create_lstm_tensor(features_df, target_series, time_steps=50):
    """
    Transforms a 2D Pandas DataFrame into a 3D Numpy Tensor for LSTM ingestion.
    """
    X_3d, y_aligned = [], []

    # Convert dataframe to raw numpy array for speed
    data_array = features_df.values
    target_array = target_series.values

    # Slide a window across the data to create sequences
    for i in range(len(data_array) - time_steps + 1):
        # Extract a 'mini-movie' of length 'time_steps'
        sequence = data_array[i : i + time_steps]
        X_3d.append(sequence)

        # The target is the label at the END of the sequence, i.e. the label
        # of the window's last row (index i + time_steps - 1), so the window
        # includes the current tick rather than stopping one tick before it
        y_aligned.append(target_array[i + time_steps - 1])

    return np.array(X_3d), np.array(y_aligned)

# Usage:
# X_tensor, y_tensor = create_lstm_tensor(X_train, y_train, time_steps=50)
# Shape of X_tensor will be: (Samples, 50, 10)

This single block of code is the bridge between flat tabular data and deep sequential learning.
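For larger datasets, the same windows can be produced without a Python loop. The following is a sketch of one alternative, assuming NumPy 1.20 or newer (which provides `sliding_window_view`) and assuming each window is paired with the label of its own last row. The function name `create_lstm_tensor_view` is ours.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view

def create_lstm_tensor_view(features, targets, time_steps=50):
    """Vectorized windowing: returns X of shape (windows, time_steps, features)."""
    features = np.asarray(features)
    targets = np.asarray(targets)

    # sliding_window_view appends the window axis last:
    # (windows, features, time_steps)
    windows = sliding_window_view(features, time_steps, axis=0)

    # Reorder to the layout an LSTM expects: (windows, time_steps, features)
    X = windows.transpose(0, 2, 1)

    # Label of the last row of each window (assumed alignment)
    y = targets[time_steps - 1:]
    return X, y

# Tiny demo: 6 ticks, 2 features, look-back of 3
features = np.arange(12, dtype=float).reshape(6, 2)
targets = np.array([0, 1, 0, 1, 1, 0])

X, y = create_lstm_tensor_view(features, targets, time_steps=3)
print(X.shape, y.shape)   # (4, 3, 2) (4,)
print(X[0])               # the first window: rows 0, 1 and 2
```

The result is a read-only view onto the original array, so it costs almost no extra memory until something forces a copy. If your framework needs a writable, contiguous array, wrap it in `np.ascontiguousarray(X)`.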

4. Architecting the Deep Quant Network in Keras

With our 3D Tensor prepared, we can now architect the neural network using TensorFlow/Keras. In high-frequency trading, we do not want a massive, 100-layer behemoth. Inference latency is critical. We want a sleek, compact network that is fast to evaluate and less prone to overfitting.

Python

from tensorflow.keras.models import Sequential
from tensorflow.keras.layers import Input, LSTM, Dense, Dropout, BatchNormalization
from tensorflow.keras.metrics import AUC
from tensorflow.keras.optimizers import Adam

def build_quant_lstm(time_steps, num_features):
    """
    Constructs a streamlined LSTM architecture for order book pattern recognition.
    """
    model = Sequential()

    # Input: one sequence of shape (time_steps, num_features)
    model.add(Input(shape=(time_steps, num_features)))

    # Layer 1: The Sequence Reader
    # return_sequences=False because we only want the final interpretation of the 50 ticks
    model.add(LSTM(units=64, return_sequences=False))

    # Regularization: Prevent the network from memorizing the noise
    model.add(BatchNormalization())
    model.add(Dropout(0.3))  # Randomly zero 30% of activations during training

    # Layer 2: The Logic Interpreter
    model.add(Dense(units=32, activation='relu'))
    model.add(Dropout(0.2))

    # Output Layer: Binary Classification (0 or 1)
    # Sigmoid activation squashes the output into a probability between 0.0 and 1.0
    model.add(Dense(units=1, activation='sigmoid'))

    # Compile the Engine
    optimizer = Adam(learning_rate=0.001)
    model.compile(optimizer=optimizer,
                  loss='binary_crossentropy',
                  metrics=[AUC(name='auc')])

    return model
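How sleek is this network? A back-of-the-envelope parameter count answers that without installing TensorFlow. The sketch below assumes the 10 features from Section 3 and the usual Keras defaults (bias terms enabled, four BatchNormalization vectors per channel). The helper names are ours, and the total is what we would expect `model.summary()` to report under those assumptions.

```python
def lstm_params(units, n_features):
    # Four weight sets (forget, input, candidate, output), each with
    # input weights, recurrent weights, and a bias vector.
    return 4 * (units * (n_features + units) + units)

def dense_params(n_in, n_out):
    return n_in * n_out + n_out

def batchnorm_params(n_channels):
    # gamma and beta are trainable; moving mean and variance are not.
    return 4 * n_channels

n_features = 10  # assumption: the 10 engineered features from Section 3

counts = {
    "LSTM(64)": lstm_params(64, n_features),
    "BatchNormalization": batchnorm_params(64),
    "Dense(32)": dense_params(64, 32),
    "Dense(1)": dense_params(32, 1),
}
total = sum(counts.values())

for name, n in counts.items():
    print(f"{name:<20} {n:>7,}")
print(f"{'Total':<20} {total:>7,}")
```

About 21.6 thousand parameters in total, with the LSTM layer accounting for 19,200 of them. That is tiny by deep learning standards, which is exactly what we want for low-latency inference.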

The Philosophy of Dropout

Notice the Dropout layers. Financial data is among the noisiest data you will ever model. If you give a neural network enough capacity, it will memorize the exact sequence of a random Tuesday in 2023. By randomly “dropping out” (deactivating) a fraction of the neurons during every training pass, 30% after the LSTM and 20% after the dense layer, we force the network to distribute its learning. It cannot rely on a single, hyper-specific neuron to make a decision. Dropout is only active during training; at inference time every neuron participates. This acts as a regularizer that pushes the network toward generalized learning and typically improves out-of-sample performance.
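Mechanically, dropout is only a random mask plus a rescale. Here is a small NumPy sketch of the “inverted dropout” scheme that Keras uses, where the surviving activations are scaled up by 1 / (1 - rate) during training so the expected output stays the same. The function below is our own illustration, not the library's code.

```python
import numpy as np

def dropout(activations, rate, training, rng):
    """Inverted dropout: zero a random fraction, rescale the survivors."""
    if not training:
        return activations                      # inference: the layer is a no-op
    keep_mask = rng.random(activations.shape) >= rate
    return activations * keep_mask / (1.0 - rate)

rng = np.random.default_rng(42)
activations = np.ones(100_000)                  # toy activations, all equal to 1

train_out = dropout(activations, rate=0.3, training=True, rng=rng)
infer_out = dropout(activations, rate=0.3, training=False, rng=rng)

dropped_fraction = np.mean(train_out == 0.0)
print(f"fraction zeroed in training: {dropped_fraction:.3f}")
print(f"mean activation in training: {train_out.mean():.3f}")
print(f"unchanged at inference:      {np.array_equal(infer_out, activations)}")
```

Roughly 30% of the activations are zeroed on each pass, yet the average stays near 1.0, so the next layer sees inputs on the same scale in training and in production.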

5. The Hardware Reality: CPU vs. GPU Inference

Training an LSTM on tick-level data effectively requires a GPU (Graphics Processing Unit). The matrix multiplications involved in updating the gates, repeated at every timestep of every sequence across many epochs, can take days on a standard CPU but often only minutes to hours on an NVIDIA CUDA-enabled GPU.

However, Inference (making a live prediction in production) is a different story.

When your bot receives a single WebSocket update, it only needs to process one 50-tick sequence. Pushing a single sequence from the CPU RAM to the GPU VRAM, performing the calculation, and retrieving the result often takes longer (due to PCIe transfer and kernel launch overhead) than just calculating it directly on the CPU.

In professional high-frequency environments, LSTMs are trained offline on massive GPU clusters, but the compiled model is often deployed on highly optimized, dedicated CPU threads (using libraries like ONNX Runtime or Intel OpenVINO) to push inference latency as low as possible, ideally into the microsecond range for a network this small.
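Whichever runtime you pick, measure it instead of guessing. Below is a minimal timing harness, sketched under the assumption that your model is wrapped in a plain Python callable. The `dummy_predict` function is a hypothetical stand-in (a single dot product and a sigmoid), not an LSTM; swap in your real inference call.

```python
import time
import numpy as np

def measure_latency(predict_fn, sample, n_warmup=20, n_runs=200):
    """Time single-sample inference and report median and p99 in microseconds."""
    for _ in range(n_warmup):                   # let caches and allocators settle
        predict_fn(sample)

    timings_us = np.empty(n_runs)
    for k in range(n_runs):
        start = time.perf_counter_ns()
        predict_fn(sample)
        timings_us[k] = (time.perf_counter_ns() - start) / 1_000

    return {
        "median_us": float(np.median(timings_us)),
        "p99_us": float(np.percentile(timings_us, 99)),
    }

# Hypothetical stand-in for a deployed model
rng = np.random.default_rng(0)
weights = rng.normal(0.0, 0.01, size=50 * 10)

def dummy_predict(sequence):
    return 1.0 / (1.0 + np.exp(-(sequence.ravel() @ weights)))

sample = rng.normal(size=(50, 10))              # one 50-tick, 10-feature sequence
stats = measure_latency(dummy_predict, sample)
print(stats)
```

Watch the p99 figure, not just the median. In live trading, the slow outliers tend to arrive exactly when the market is busiest.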

Conclusion: The Expansion of Perception

By integrating an LSTM network into your quantitative architecture, you have fundamentally altered how your system perceives reality.

Your original Python bot (Season 2) was a reactionary machine. It saw a Z-score, and it executed. Your LightGBM model (Season 3, Post 11) was a tactical machine. It saw a complex snapshot, and it executed.

But your Deep Learning LSTM is a strategic entity. It watches. It remembers. It waits for the narrative of the order book to unfold, recognizing the invisible footprints of institutional algorithms before striking with mathematical precision.

We now possess two distinctly different, highly powerful AI brains:

- The lightning-fast, tabular logic of the LightGBM.

- The deep, sequential pattern recognition of the LSTM.

Which one should we use in live trading? The answer is: Both.

In Post 14, we will explore the apex of machine learning architecture: The Ensemble. We will learn how to mathematically fuse the predictions of our statistical models, our tree models, and our deep learning models into a single, undeniable “Master Signal” that maximizes our Sharpe Ratio and crushes false positives.

The models are trained. The memory is active. Now, we unite them.

Stay tuned for Post 14.