Welcome back to Nova Quant Lab.

In our previous session, we laid the theoretical groundwork for Season 3. We discussed the transition from classical, linear statistical models to the non-linear, high-dimensional realm of Machine Learning (ML). We introduced the concept of the Order Book Imbalance (OBI) and established that feeding raw price data into an ML model is a recipe for catastrophic overfitting.

To an AI model like LightGBM, the raw market does not exist. The model only knows what you show it. If you show it garbage, it will learn garbage. If you show it normalized, stationary representations of market microstructure, it has a chance to learn patterns that actually precede short-term price moves.

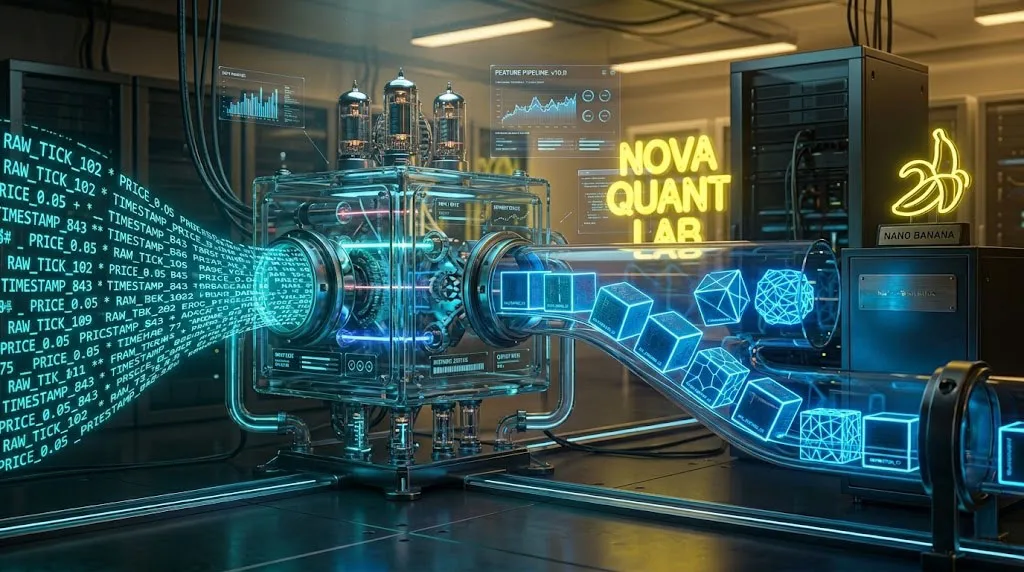

Today, we transition from theory to code. We are going to build the Real-Time Feature Engineering Pipeline.

This pipeline acts as the translation layer between the raw, chaotic WebSocket data pouring in from Binance or Bybit, and the structured numerical arrays required by our machine learning inference engine. We will write the Python code to calculate depth-weighted OBI, the Micro-Price, and rolling momentum features, all designed to add as little latency as possible to each update.

1. The Raw Material: Anatomy of an L2 WebSocket Payload

Before we can engineer features, we must understand our raw material. If you followed our Asynchronous Ingestion guide in Season 2 (Post 2), your ccxt.pro WebSocket connection is constantly pushing Level 2 (L2) order book updates.

A standard JSON payload for an L2 update looks roughly like this:

JSON

{
  "symbol": "BTC/USDT",
  "timestamp": 1690890123456,
  "bids": [
    [60000.0, 1.5],
    [59999.5, 2.0],
    [59998.0, 0.5]
  ],
  "asks": [
    [60000.5, 0.8],
    [60001.0, 3.0],
    [60002.5, 1.2]
  ]
}

This data represents the “Resting Liquidity”: limit orders waiting to be filled. The naive approach is to flatten this JSON and feed the prices and volumes directly into an ML model. This fails because the price of Bitcoin is non-stationary (it can range from $10,000 to $100,000). A tree-based model cannot extrapolate beyond the range it was trained on, so a model trained on $60,000 data has no meaningful splits for $80,000 and will treat every such price as if it were the highest value it has already seen.

We must transform these absolute prices and volumes into Stationary Relational Features.
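As a minimal sketch of what "relational" can mean here, the snippet below parses the sample payload and expresses the book relative to its own mid-price, then checks that the result barely changes when every price is scaled up by 50%. The helper name `relative_book_features` and the particular features it returns are illustrative assumptions, not part of the pipeline built later in this post.

```python
import json
import math

SAMPLE_PAYLOAD = """
{"symbol": "BTC/USDT", "timestamp": 1690890123456,
 "bids": [[60000.0, 1.5], [59999.5, 2.0], [59998.0, 0.5]],
 "asks": [[60000.5, 0.8], [60001.0, 3.0], [60002.5, 1.2]]}
"""


def relative_book_features(book):
    """Hypothetical helper: describe the book relative to its own mid-price."""
    best_bid = book["bids"][0][0]
    best_ask = book["asks"][0][0]
    mid = (best_bid + best_ask) / 2.0
    bid_volume = sum(volume for _, volume in book["bids"])
    ask_volume = sum(volume for _, volume in book["asks"])
    return {
        # Spread in basis points of the mid-price, not in dollars
        "spread_bps": (best_ask - best_bid) / mid * 1e4,
        # Distance of each level from the mid, as a fraction of the mid
        "bid_distances": [(mid - price) / mid for price, _ in book["bids"]],
        "ask_distances": [(price - mid) / mid for price, _ in book["asks"]],
        # Share of visible volume resting on the bid side (0.0 to 1.0)
        "bid_volume_share": bid_volume / (bid_volume + ask_volume),
    }


book = json.loads(SAMPLE_PAYLOAD)
features = relative_book_features(book)

# The same book shape at a 50% higher price level
scaled_book = dict(
    book,
    bids=[[price * 1.5, volume] for price, volume in book["bids"]],
    asks=[[price * 1.5, volume] for price, volume in book["asks"]],
)
scaled_features = relative_book_features(scaled_book)

print(features["spread_bps"], scaled_features["spread_bps"])
print(math.isclose(features["spread_bps"], scaled_features["spread_bps"]))
```

The absolute prices differ by $30,000 between the two books, yet the relative features are effectively identical, which is the property a model needs in order to generalize across price regimes.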

2. Engineering the Order Book Imbalance (OBI) in Python

In Post 9, we defined the Order Book Imbalance (OBI) as a measure of bid volume relative to ask volume. However, a professional quant does not simply look at the “Best Bid” and “Best Ask” (Level 1). Institutional spoofing algorithms constantly flash fake orders at Level 1 to manipulate retail bots.

To find the true intention of the market, we must calculate a Depth-Weighted OBI. We will aggregate the volume across the top $N$ levels of the order book, giving more weight to orders closer to the current price.

Here is the robust Python implementation:

Python

import numpy as np

class FeatureEngineer:
    def __init__(self, depth_levels=10):
        """
        Initializes the Feature Engineer.
        depth_levels: How many levels of the order book to analyze.
        """
        self.depth_levels = depth_levels

    def calculate_obi(self, bids, asks):
        """
        Calculates the Depth-Weighted Order Book Imbalance.
        Returns a value between -1.0 (Extreme Sell Pressure) and +1.0 (Extreme Buy Pressure).
        """
        if not bids or not asks:
            return 0.0

        total_bid_weight = 0.0
        total_ask_weight = 0.0

        # Determine the mid-price to calculate distance-based weighting
        best_bid_price = bids[0][0]
        best_ask_price = asks[0][0]
        mid_price = (best_bid_price + best_ask_price) / 2.0

        # Aggregate Bid Weights (Volume / Distance from Mid-Price)
        for i in range(min(self.depth_levels, len(bids))):
            price, volume = bids[i]
            distance = abs(mid_price - price) / mid_price
            # Add a tiny epsilon to prevent division by zero
            weight = volume / (distance + 1e-6)
            total_bid_weight += weight

        # Aggregate Ask Weights
        for i in range(min(self.depth_levels, len(asks))):
            price, volume = asks[i]
            distance = abs(price - mid_price) / mid_price
            weight = volume / (distance + 1e-6)
            total_ask_weight += weight

        # Calculate Normalized OBI
        obi = (total_bid_weight - total_ask_weight) / (total_bid_weight + total_ask_weight + 1e-8)
        return obi

By dividing the volume by its distance from the mid-price, this code heavily discounts “spoof” orders placed far away from the active trading zone, giving your ML model a cleaner representation of immediate buy/sell pressure.
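To make the effect of the distance weighting concrete, here is a small standalone sketch that applies the same weighting rule to the sample payload and compares it against an unweighted imbalance. The 100 BTC ask roughly 1% above the mid-price is a made-up order added purely for illustration, and the function names `depth_weighted_obi` and `plain_obi` are hypothetical.

```python
def depth_weighted_obi(bids, asks, depth_levels=10, eps=1e-6):
    """Same rule as calculate_obi: volume / (relative distance from mid + eps)."""
    mid = (bids[0][0] + asks[0][0]) / 2.0

    def side_weight(levels):
        return sum(
            volume / (abs(price - mid) / mid + eps)
            for price, volume in levels[:depth_levels]
        )

    bid_weight = side_weight(bids)
    ask_weight = side_weight(asks)
    return (bid_weight - ask_weight) / (bid_weight + ask_weight + 1e-8)


def plain_obi(bids, asks, depth_levels=10):
    """Unweighted comparison: every level counts the same regardless of distance."""
    bid_volume = sum(volume for _, volume in bids[:depth_levels])
    ask_volume = sum(volume for _, volume in asks[:depth_levels])
    return (bid_volume - ask_volume) / (bid_volume + ask_volume)


bids = [[60000.0, 1.5], [59999.5, 2.0], [59998.0, 0.5]]
asks = [[60000.5, 0.8], [60001.0, 3.0], [60002.5, 1.2]]

# Hypothetical large ask parked about 1% above the mid-price
asks_with_wall = asks + [[60600.0, 100.0]]

weighted_shift = abs(depth_weighted_obi(bids, asks_with_wall) - depth_weighted_obi(bids, asks))
plain_shift = abs(plain_obi(bids, asks_with_wall) - plain_obi(bids, asks))

print(f"shift in depth-weighted OBI: {weighted_shift:.4f}")
print(f"shift in unweighted OBI:     {plain_shift:.4f}")
```

The distant wall swings the unweighted imbalance sharply toward the sell side, while the depth-weighted value moves only slightly. The flip side of this design is that levels nearest the mid-price dominate the result, so the epsilon and the weighting function are worth treating as tunable choices rather than fixed constants.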

3. Unmasking the True Price: The Micro-Price

The “Last Traded Price” displayed on your exchange GUI is inherently lagging: it describes the most recent trade, not the current state of the book. Even the “Mid-Price” (the exact average of the best bid and best ask) is flawed because it ignores how much liquidity rests on each side.

If the Best Bid is $60,000 with 100 BTC resting, and the Best Ask is $60,001 with only 1 BTC resting, the Mid-Price is $60,000.50. However, common sense dictates that the 1 BTC wall will be crushed instantly by a market buyer, making the “true” price much closer to $60,001.

To feed our ML model the true cost of execution, we calculate the Volume-Weighted Micro-Price.

[ Formula: The Micro-Price ]

Micro-Price = (Best_Bid_Price × Best_Ask_Vol + Best_Ask_Price × Best_Bid_Vol) ÷ (Best_Bid_Vol + Best_Ask_Vol)

Notice the cross-multiplication. We multiply the Bid Price by the Ask Volume, and the Ask Price by the Bid Volume. This inversion ensures that if the Bid Volume is massively larger than the Ask Volume, the Micro-Price shifts closer to the Ask Price, reflecting the upward pressure on the next trade.
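Plugging the numbers from the example above into the formula shows the effect of the cross-multiplication. This is only a worked check of the arithmetic; the standalone function name `micro_price` is an illustrative assumption.

```python
def micro_price(best_bid_price, best_bid_vol, best_ask_price, best_ask_vol):
    """Level 1 micro-price: each price is weighted by the opposite side's volume."""
    total_vol = best_bid_vol + best_ask_vol
    return (best_bid_price * best_ask_vol + best_ask_price * best_bid_vol) / total_vol


# 100 BTC resting on the bid at $60,000, 1 BTC resting on the ask at $60,001
mid = (60000.0 + 60001.0) / 2.0
mp = micro_price(60000.0, 100.0, 60001.0, 1.0)

print(f"mid-price:   {mid:.2f}")   # 60000.50
print(f"micro-price: {mp:.2f}")    # 60000.99
```

With a 100-to-1 volume imbalance, the micro-price sits about one cent below the best ask, whereas the mid-price stays exactly halfway between the two quotes.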

Let’s add this to our FeatureEngineer class:

Python

    def calculate_micro_price(self, bids, asks):
        """
        Calculates the Volume-Weighted Micro-Price from Level 1 data.
        """
        if not bids or not asks:
            return None

        best_bid_price, best_bid_vol = bids[0]
        best_ask_price, best_ask_vol = asks[0]

        total_vol = best_bid_vol + best_ask_vol
        if total_vol == 0:
            # No resting volume on either side: fall back to the plain mid-price
            return (best_bid_price + best_ask_price) / 2.0

        micro_price = (best_bid_price * best_ask_vol + best_ask_price * best_bid_vol) / total_vol
        return micro_price

4. Providing Temporal Context: High-Speed Rolling Memory

Here is a critical architectural concept: Gradient Boosted Trees (like LightGBM) are “stateless.” When you feed a LightGBM model an OBI of +0.5, it has no idea what the OBI was one second ago. It cannot see momentum.

If the OBI is +0.5, is it building up from 0.0 (bullish momentum), or is it collapsing down from +0.9 (bullish exhaustion)? The model needs to know.

To solve this, we must engineer Temporal Features: rolling means, standard deviations, and rates of change. However, calculating a rolling mean with the pandas library inside a high-frequency asynchronous loop is a serious mistake. pandas is built for vectorized operations on whole columns, and its per-call overhead makes it very slow for row-by-row streaming data.

Instead, a professional quant utilizes Python’s built-in collections.deque (Double-Ended Queue). A deque allows for $O(1)$ time complexity for appending and popping data, making it the perfect lightweight memory buffer for HFT bots.
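The sketch below illustrates both ideas from this section with a tiny rolling buffer: a fixed-length deque that evicts the oldest tick automatically, and a momentum reading that separates a rising OBI from a falling one even when both end at +0.5. The `RollingMean` class and its running-sum trick are an illustrative variation, not the implementation used in the pipeline that follows.

```python
from collections import deque


class RollingMean:
    """Hypothetical helper: fixed-size window with an O(1) running mean."""

    def __init__(self, window):
        self.window = window
        self.values = deque(maxlen=window)
        self.total = 0.0

    def push(self, x):
        # When the buffer is full, the leftmost value is about to be evicted,
        # so remove it from the running sum before appending the new one.
        if len(self.values) == self.window:
            self.total -= self.values[0]
        self.values.append(x)
        self.total += x
        return self.total / len(self.values)


def momentum(series, window=5):
    """Latest value minus the rolling mean over the last `window` values."""
    roller = RollingMean(window)
    mean = 0.0
    for x in series:
        mean = roller.push(x)
    return series[-1] - mean


rising = [0.1, 0.2, 0.3, 0.4, 0.5]    # OBI building up to +0.5
falling = [0.9, 0.8, 0.7, 0.6, 0.5]   # OBI collapsing down to +0.5

print(momentum(rising))    # positive: current value is above its recent mean
print(momentum(falling))   # negative: current value is below its recent mean

# Eviction: pushing a fourth value into a window of 3 drops the oldest one
roller = RollingMean(3)
for x in [1.0, 2.0, 3.0, 4.0]:
    latest_mean = roller.push(x)
print(list(roller.values), latest_mean)
```

A running sum avoids re-summing the whole window on every tick, at the cost of slow floating-point drift over very long runs; recomputing the sum from the buffer occasionally is one way to keep that in check.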

Python

from collections import deque
import statistics

class FeatureEngineer:
    # calculate_obi() and calculate_micro_price() from the previous sections
    # belong in this class as well; they are omitted here for brevity.

    def __init__(self, depth_levels=10, memory_ticks=50):
        self.depth_levels = depth_levels
        # Fixed-length memory buffers: the oldest tick is evicted automatically
        self.obi_history = deque(maxlen=memory_ticks)
        self.micro_price_history = deque(maxlen=memory_ticks)

    def update_and_extract_features(self, bids, asks):
        """
        The main pipeline method. Called every time a WebSocket update arrives.
        Returns a dictionary of engineered features ready for ML inference,
        or None while there is not yet enough data.
        """
        # Skip empty or one-sided books so that placeholder values
        # (OBI 0.0, Micro-Price None) never enter the rolling buffers
        if not bids or not asks:
            return None

        current_obi = self.calculate_obi(bids, asks)
        current_mp = self.calculate_micro_price(bids, asks)

        # Append to memory buffers
        self.obi_history.append(current_obi)
        self.micro_price_history.append(current_mp)

        # We need the buffer to be full before calculating rolling stats
        if len(self.obi_history) < self.obi_history.maxlen:
            return None  # Not enough data yet

        # 1. Calculate Rolling Mean of OBI
        rolling_mean_obi = statistics.fmean(self.obi_history)

        # 2. Calculate OBI Momentum (Current vs Mean)
        obi_momentum = current_obi - rolling_mean_obi

        # 3. Calculate the Micro-Price return across the window (short-term drift)
        first_mp = self.micro_price_history[0]
        mp_return = (current_mp - first_mp) / first_mp

        # Compile the final Feature Vector for the ML Model
        feature_vector = {
            'obi_instant': current_obi,
            'obi_rolling_mean': rolling_mean_obi,
            'obi_momentum': obi_momentum,
            # The key name assumes the default memory_ticks=50
            'micro_price_return_50ticks': mp_return
        }
        return feature_vector

5. The Architecture of Real-Time Inference

Let us visualize where this FeatureEngineer lives within the architecture we built in Season 2.

The WebSocket listener (Post 2) receives a JSON payload. Instead of immediately triggering an execution, it hands the bids and asks to the FeatureEngineer. The engineer updates its ultra-fast deque memory, calculates the Micro-Price, the depth-weighted OBI, and the rolling momentum. It then packages these numbers into a clean feature_vector.

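This vector is then passed to our Machine Learning model for real-time inference. LightGBM's predict expects a 2D array with one row per sample rather than a dictionary, so the values are first arranged into a single row, in the same column order that was used during training:

prediction = lightgbm_model.predict(np.array([list(feature_vector.values())]))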

If the model predicts a high probability of a bullish micro-breakout within the next 500 milliseconds, it passes a “Go” signal to the SignalOrchestrator (Post 4), which finally triggers the asyncio.gather atomic execution (Post 3).

By structuring our code this way, we maintain a strict Separation of Concerns. Our data ingestion logic does not care about machine learning, and our machine learning model does not care about exchange API limits. They communicate through the universal language of engineered features.
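The flow described in this section can be sketched as a single handler that only knows two callables: one that produces features and one that scores them. Everything here is a hypothetical stand-in: `on_book_update`, `to_model_row`, the 0.7 threshold, and the stub functions are illustrative assumptions, and the stub scorer takes the place of a trained model.

```python
FEATURE_ORDER = (
    "obi_instant",
    "obi_rolling_mean",
    "obi_momentum",
    "micro_price_return_50ticks",
)


def to_model_row(feature_vector, feature_order=FEATURE_ORDER):
    """Arrange the feature dict into one row with a fixed column order."""
    return [[float(feature_vector[name]) for name in feature_order]]


def on_book_update(bids, asks, extract_features, predict, threshold=0.7):
    """Hypothetical glue code: ingestion and scoring only meet through features."""
    features = extract_features(bids, asks)
    if features is None:
        return None  # buffers still warming up, nothing to score yet
    probability = predict(to_model_row(features))[0]
    return "GO" if probability >= threshold else "HOLD"


# Stand-ins for FeatureEngineer.update_and_extract_features and the model
def stub_extract(bids, asks):
    return {
        "obi_momentum": 0.15,
        "obi_instant": 0.40,
        "micro_price_return_50ticks": 0.0002,
        "obi_rolling_mean": 0.25,
    }


def stub_warming_up(bids, asks):
    return None


def stub_predict(rows):
    # Pretend the score is driven by the third column (obi_momentum)
    return [0.9 if row[2] > 0.1 else 0.2 for row in rows]


bids = [[60000.0, 1.5]]
asks = [[60000.5, 0.8]]

print(to_model_row(stub_extract(bids, asks)))
print(on_book_update(bids, asks, stub_extract, stub_predict))
print(on_book_update(bids, asks, stub_warming_up, stub_predict))
```

Fixing the column order in one place means the handler keeps working even if the feature dictionary is built in a different key order, and swapping the stub scorer for a real model does not touch the ingestion side at all.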

Conclusion: The Alchemist’s Gold

Data is the oil of the 21st century, but raw oil cannot power a high-performance engine. It must be refined.

In this post, you have built the refinery. You have learned how to transform chaotic, spoof-ridden order books into clean, stationary, and far more informative signals using plain, lightweight Python. You have learned why row-by-row pandas will kill your execution speed, and why the deque is the quant’s best friend.

We now have a continuous stream of high-quality features flowing through our system. The next logical step is to build the brain that will consume these features.

In Post 11, we will leave the real-time execution loop for a moment and step into the research laboratory. We will take a massive historical dataset of these engineered features and train our very first LightGBM (Gradient Boosted Tree) Model. We will look at hyperparameter tuning, feature importance plots, and how to teach the algorithm to predict the unpredictable.

The data is refined. The pipeline is flowing. Now, we build the intelligence.

Stay tuned for Post 11.