Welcome back to Nova Quant Lab, and welcome to the highly anticipated Season 3.

If you have survived the crucible of Season 2, you are no longer a retail trader. You are the architect of a robust, automated quantitative infrastructure. You have built a Python-based execution engine capable of atomic concurrency, you have deployed it on a hardened 24GB cloud server, and you have mastered the classical statistical arts of mean reversion and cointegration. Your systems are logical, deterministic, and mathematically sound.

But in the apex predator environment of global financial markets, classical statistics are only the baseline. Traditional linear models, like the Ordinary Least Squares (OLS) regression we used in Post 8, assume that the relationship between variables is a straight line. They assume that variables interact in a predictable, constant manner.

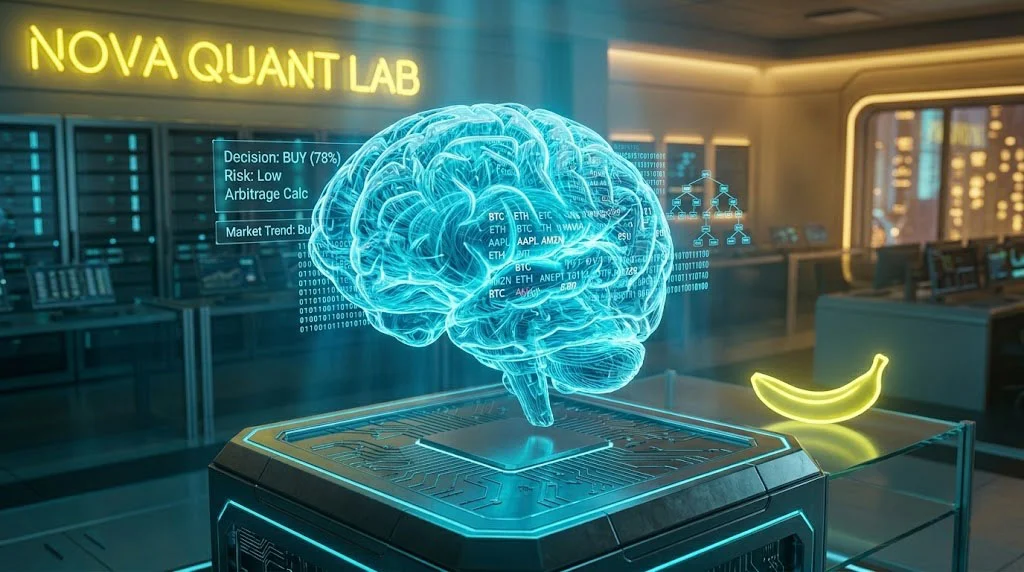

The harsh reality is that the cryptocurrency market is highly non-linear, infinitely complex, and notoriously noisy. To find the alpha that classical statistics leave behind, we must evolve our system’s brain from a simple calculator into a pattern-recognition engine. We must enter the era of Artificial Intelligence and Machine Learning (AI/ML).

In Post 9, the premiere of Season 3, we will explore why machine learning is replacing traditional models, how to engineer “Features” from raw order book data, and how to avoid the deadly traps of overfitting in financial time-series prediction.

1. The Limits of Linearity: Why Traditional Math is Not Enough

Classical quantitative finance relies heavily on linear models. When we calculated the Hedge Ratio in Season 2, we drew a straight line of best fit through a scatter plot of historical prices. If the relationship between the assets stays as stable as it was in the past, a linear model works well.

However, market dynamics change constantly due to hidden variables: macroeconomic news, shifts in exchange leverage limits, or the sudden activation of a massive institutional algorithmic seller. These events create “Regime Shifts.” A linear model cannot adapt to a regime shift quickly; it simply sees it as an “error” or a massive Z-Score deviation, which might trigger your Kill-Switch.

Machine Learning models, specifically non-linear algorithms such as tree ensembles, do not force the data into a straight line. Instead, they build layered, multi-dimensional decision trees that can learn conditional relationships. For example, an ML model can learn: “If the spread is wide AND the Bitcoin order book is heavily skewed toward sellers AND the funding rate is negative, THEN the probability of mean-reversion drops from 80% to 20%.”

Classical statistics tell you what happened. Machine learning estimates the probability of what is going to happen next.

2. Feature Engineering: The True Currency of AI

There is a dangerous myth in the retail trading community that you can simply feed raw price data (Open, High, Low, Close) into a Neural Network, and it will magically print money. In the quantitative industry, this is known as “Garbage In, Garbage Out” (GIGO).

Machine Learning models are incredibly powerful pattern matchers, but they are blind to context. If you want the model to predict the short-term direction of the market, you must engineer mathematical inputs that capture the actual micro-structure of the market. We call these inputs Features.

In high-frequency and mid-frequency arbitrage, the most predictive features do not come from the price chart; they come from the Level 2 Order Book.

The Order Book Imbalance (OBI)

When buyers are aggressive, they don’t just execute market orders; they stack massive Limit Buy orders close to the current price, creating a “wall” of liquidity. When sellers are aggressive, they stack Limit Sell orders. The normalized difference between these two walls is a highly predictive feature called the Order Book Imbalance (OBI).

OBI = (Bid_Volume - Ask_Volume) / (Bid_Volume + Ask_Volume)

Where Bid_Volume is the total sum of resting buy orders within a specific percentage of the current price (e.g., 0.1%), and Ask_Volume is the total sum of resting sell orders within the same range.

If the OBI is +0.8, it means 90% of the nearby liquidity comes from buyers (the buyer share is (1 + OBI) / 2 = 0.9). This massive imbalance creates an upward “micro-price momentum.” If your Statistical Arbitrage Z-Score says “Short the Spread,” but your ML model sees an OBI of +0.8, the ML model will override the linear model and tell the Execution Engine to wait, saving you from being run over by a bullish freight train.
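The OBI calculation above can be sketched in a few lines of Python. This is a minimal illustration, not production code: the function name `order_book_imbalance`, the `(price, size)` tuple layout, and the use of the mid price as the “current price” are all assumptions made for the example.

```python
from typing import List, Tuple

Level = Tuple[float, float]  # (price, size) for one resting order book level


def order_book_imbalance(bids: List[Level], asks: List[Level], band: float = 0.001) -> float:
    """Return OBI in [-1, 1] using resting volume within `band` of the mid price.

    band=0.001 corresponds to the 0.1% range mentioned in the text.
    """
    if not bids or not asks:
        raise ValueError("both sides of the book must be non-empty")
    best_bid = max(price for price, _ in bids)
    best_ask = min(price for price, _ in asks)
    mid = (best_bid + best_ask) / 2.0  # assumption: mid price stands in for "current price"

    # Only count liquidity resting close to the current price.
    bid_volume = sum(size for price, size in bids if price >= mid * (1.0 - band))
    ask_volume = sum(size for price, size in asks if price <= mid * (1.0 + band))

    total = bid_volume + ask_volume
    if total == 0:
        return 0.0  # no nearby liquidity on either side: treat as balanced
    return (bid_volume - ask_volume) / total


# Hypothetical snapshot: 9 units of nearby bids against 1 unit of nearby asks.
bids = [(99.99, 6.0), (99.95, 3.0), (99.00, 50.0)]
asks = [(100.01, 1.0), (101.00, 40.0)]
obi = order_book_imbalance(bids, asks)
buyer_share = (1.0 + obi) / 2.0  # fraction of nearby liquidity on the bid side
```

In this made-up snapshot the deep levels at 99.00 and 101.00 fall outside the 0.1% band and are ignored, so the feature reflects only the liquidity that can influence the next few ticks.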

Other critical engineered features include:

- Trade Flow Imbalance (TFI): The ratio of aggressive Taker Buy volume vs. Taker Sell volume over the last 100 milliseconds.

- Spread Velocity: The rate of change (first derivative) of the Z-score, telling the model how fast the spread is moving toward or away from its mean.
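Both features can be sketched in plain Python. The function names, the `(timestamp, side, size)` trade layout, and the choice to normalize TFI into the range [-1, 1] (mirroring the OBI formula) are assumptions for illustration; the spread velocity here is a simple two-point finite difference.

```python
from typing import Sequence, Tuple

Trade = Tuple[float, str, float]  # (timestamp in seconds, taker side "buy"/"sell", size)


def trade_flow_imbalance(trades: Sequence[Trade], now: float, window: float = 0.1) -> float:
    """Normalized taker buy vs. taker sell volume over the last `window` seconds."""
    recent = [(side, size) for ts, side, size in trades if now - window <= ts <= now]
    buy = sum(size for side, size in recent if side == "buy")
    sell = sum(size for side, size in recent if side == "sell")
    total = buy + sell
    return 0.0 if total == 0 else (buy - sell) / total


def spread_velocity(z_scores: Sequence[float], timestamps: Sequence[float]) -> float:
    """Finite-difference estimate of dZ/dt from the two most recent samples."""
    if len(z_scores) < 2 or len(z_scores) != len(timestamps):
        raise ValueError("need at least two aligned (z_score, timestamp) samples")
    dt = timestamps[-1] - timestamps[-2]
    if dt <= 0:
        raise ValueError("timestamps must be strictly increasing")
    return (z_scores[-1] - z_scores[-2]) / dt


# Hypothetical tape: the first trade is older than 100 ms and is ignored.
trades = [(9.85, "buy", 5.0), (9.95, "buy", 3.0), (9.97, "sell", 1.0)]
tfi = trade_flow_imbalance(trades, now=10.0)

# Z-score fell from 2.4 to 2.1 over half a second: the spread is reverting.
velocity = spread_velocity([2.4, 2.1], [0.0, 0.5])
```

A negative velocity while the Z-score is positive means the spread is already closing, which is the kind of context a static Z-score threshold cannot express on its own.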

3. Choosing the Right Brain: Trees vs. Neural Networks

When developers first transition into AI, they immediately gravitate toward Deep Learning and Recurrent Neural Networks (like LSTMs) because they are the cutting edge of AI technology.

However, in tabular financial data (rows of timestamps and columns of engineered features), Deep Neural Networks are often the wrong tool for the job. They require massive amounts of data, are prone to extreme overfitting, and, most importantly, they are a “Black Box.” If a Neural Network loses $10,000, it is nearly impossible to ask it why it made that decision. Furthermore, forward-passing data through a massive neural network introduces execution latency.

The professional quant’s weapon of choice is Gradient Boosted Decision Trees (GBDT), specifically libraries like XGBoost or LightGBM.

Why LightGBM Dominates Arbitrage

LightGBM builds a sequence of decision trees, where each new tree specifically tries to correct the errors made by the previous trees.

- Speed: LightGBM is blazingly fast. A compact model can typically evaluate a new row of order book data and output a prediction in a fraction of a millisecond, depending on model size and calling overhead, which aligns well with the low-latency Execution Engine we built in Post 3.

- Explainability: Tree-based models allow us to calculate “Feature Importance.” We can run a command in Python and see which mathematical features are driving the predictions. If the model attributes 40% of its total split gain to OBI, that is evidence that our market micro-structure theory holds up.

- Non-Linearity: It easily captures the complex, conditional logic of the order book without the need for massive GPU clusters.
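The idea that each new tree corrects the errors of the previous ones can be illustrated with a toy booster in pure Python. This is a teaching sketch, not LightGBM's actual algorithm: it uses one feature, one-split trees (“stumps”), squared error, and hypothetical names such as `fit_stump` and `boost`. The target is deliberately U-shaped in OBI, a pattern a straight line cannot fit.

```python
from typing import List, Tuple

Stump = Tuple[float, float, float]  # (threshold, value if x <= threshold, value if x > threshold)


def fit_stump(xs: List[float], residuals: List[float]) -> Stump:
    """Find the single split on x that minimizes squared error against the residuals."""
    best: Stump = (xs[0], 0.0, 0.0)
    best_err = float("inf")
    for threshold in sorted(set(xs))[:-1]:  # skip the max so the right side is never empty
        left = [r for x, r in zip(xs, residuals) if x <= threshold]
        right = [r for x, r in zip(xs, residuals) if x > threshold]
        left_mean = sum(left) / len(left)
        right_mean = sum(right) / len(right)
        err = sum((r - left_mean) ** 2 for r in left) + sum((r - right_mean) ** 2 for r in right)
        if err < best_err:
            best_err, best = err, (threshold, left_mean, right_mean)
    return best


def predict(base: float, stumps: List[Stump], lr: float, x: float) -> float:
    """Base prediction plus the shrunken sum of every stump's output."""
    return base + lr * sum(lv if x <= t else rv for t, lv, rv in stumps)


def boost(xs: List[float], ys: List[float], n_rounds: int = 50, lr: float = 0.3):
    """Fit stumps sequentially, each one trained on the current residual errors."""
    base = sum(ys) / len(ys)
    stumps: List[Stump] = []
    mse_history: List[float] = []  # training MSE measured before each round
    for _ in range(n_rounds):
        residuals = [y - predict(base, stumps, lr, x) for x, y in zip(xs, ys)]
        mse_history.append(sum(r * r for r in residuals) / len(residuals))
        stumps.append(fit_stump(xs, residuals))
    return base, stumps, mse_history


# Toy data: the event (1.0) only occurs when the imbalance is extreme in either direction.
xs = [i / 10 for i in range(-10, 11)]
ys = [1.0 if abs(x) > 0.5 else 0.0 for x in xs]
base, stumps, mse_history = boost(xs, ys)
```

Printing `mse_history` shows the training error shrinking round by round as each stump patches what the ensemble still gets wrong. A real library adds many features, deeper trees, regularization, and heavy performance engineering on top of this loop.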

4. The Deadly Trap: Purged Time-Series Cross-Validation

If you train a machine learning model on financial data using standard data science techniques, your model will show a 95% accuracy rate in testing, and then it will immediately lose all your money in live production. Why? Because of Look-Ahead Bias and Data Leakage.

In standard ML (like predicting whether an image is a cat or a dog), you randomly shuffle your data and split it into 80% Training and 20% Testing. You cannot do this in finance. Time is sequential. If you randomly shuffle price data, your model will accidentally “see” the future price of Wednesday while trying to predict the price of Monday.

Furthermore, financial features have high autocorrelation (they are sticky). If a large institutional buyer is accumulating Bitcoin, the Order Book Imbalance will be highly positive from 10:00 AM to 10:30 AM. If you split your data at 10:15 AM, the training set and the testing set will share the exact same underlying event. The model isn’t learning to predict; it is just memorizing the institutional buyer.

To solve this, professional quants use a technique popularized by Dr. Marcos Lopez de Prado called Purged K-Fold Cross-Validation.

When we split our time-series data into training and testing blocks, we must insert a “Purge Window” (a gap of time) between the two sets. This gap ensures that any ongoing market events (like an open trade or a lingering order book imbalance) have completely finished before the testing data begins. By strictly separating the past from the future, we force the ML model to actually learn the underlying mechanics of the market, ensuring that its predictions hold up in the unforgiving environment of live trading.
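A simplified version of the purged split can be sketched as a generator of index lists. It assumes the samples are already sorted chronologically and evenly spaced, so the purge window can be expressed as a fixed number of samples. The full technique purges based on how long each label's outcome window overlaps the test block, so treat the function name `purged_kfold` and the fixed-size gap as illustrative assumptions.

```python
from typing import Iterator, List, Tuple


def purged_kfold(
    n_samples: int, n_splits: int = 5, purge: int = 10
) -> Iterator[Tuple[List[int], List[int]]]:
    """Yield (train_idx, test_idx) pairs with contiguous, unshuffled test blocks.

    `purge` samples on each side of the test block are dropped from the
    training set so that events straddling the boundary cannot leak across it.
    """
    fold_size = n_samples // n_splits
    for k in range(n_splits):
        start = k * fold_size
        # The last fold absorbs any remainder so every sample is tested once.
        stop = n_samples if k == n_splits - 1 else start + fold_size
        test_idx = list(range(start, stop))
        train_idx = [i for i in range(n_samples) if i < start - purge or i >= stop + purge]
        yield train_idx, test_idx


# Hypothetical dataset of 100 chronologically ordered rows.
folds = list(purged_kfold(100, n_splits=5, purge=10))
```

With these settings the first fold tests on rows 0 to 19 and trains only on rows 30 onward; rows 20 to 29 are discarded as the purge window. The purge length should be at least as long as the slowest-decaying feature or the label horizon, whichever is longer.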

5. The Architecture of an ML-Driven Bot

Integrating Machine Learning does not mean deleting the infrastructure we built in Season 2. Rather, the ML model acts as an elite filter layered on top of our existing Signal Orchestrator.

The architecture flows as follows:

- Ingestion: The WebSockets continue streaming L2 Order Book data.

- Feature Engineering Module: A new Python class transforms the raw bids and asks into OBI, TFI, and Spread Velocity in real-time.

- The ML Inference Engine: The engineered features are passed into our pre-trained LightGBM model. The model outputs a probability score between 0 and 1 (e.g., “There is an 82% probability the spread will narrow in the next 5 seconds”).

- The Execution Gate: If the absolute value of the Statistical Z-Score is > 2.0 AND the ML Probability Score is > 0.80, the atomic orders are dispatched.

By demanding consensus between classical statistics and modern machine learning, we drastically reduce our false positive rate, preserving capital and maximizing our Sharpe Ratio.
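The consensus rule at the Execution Gate is small enough to sketch directly. The function name `should_dispatch` is hypothetical, and taking the absolute value of the Z-score (so that both long and short spread entries pass the statistical check) is an assumption of this example.

```python
def should_dispatch(
    z_score: float,
    ml_probability: float,
    z_threshold: float = 2.0,
    prob_threshold: float = 0.80,
) -> bool:
    """Return True only when the statistical signal and the ML signal both agree."""
    if not 0.0 <= ml_probability <= 1.0:
        raise ValueError("ml_probability must be a probability in [0, 1]")
    # Assumption: a large deviation in either direction counts as a statistical signal.
    statistical_signal = abs(z_score) > z_threshold
    ml_signal = ml_probability > prob_threshold
    return statistical_signal and ml_signal


# Hypothetical readings from the Signal Orchestrator and the inference engine.
go = should_dispatch(z_score=2.5, ml_probability=0.82)       # both agree
blocked = should_dispatch(z_score=2.5, ml_probability=0.60)  # ML vetoes the trade
```

Keeping the gate as a pure function with explicit thresholds makes it easy to unit test and to tune the two cutoffs independently during backtesting.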

Conclusion: The Machine is Learning

We have officially breached the frontier of Season 3. We are no longer simply coding rules; we are training our systems to recognize the invisible mechanics of liquidity.

Machine learning is not a magic bullet that replaces good risk management. It is a highly sophisticated magnifying glass that allows us to see the micro-structure of the market more clearly than any human ever could.

In Post 10, we will move from theory to code. We will open our Jupyter Notebooks and build a complete Feature Engineering pipeline. We will write the Python logic to extract the Order Book Imbalance (OBI) from raw CCXT data and prepare our dataset for the LightGBM algorithm.

The baseline is set. The data is flowing. Now, we teach the machine to think.

Stay tuned for Post 10.